wspy – added support for --memstats

I have added support to wspy for the --memstats option.

This option periodically samples /proc/meminfo and then dumps the sampled values into a *.csv file. So far, I’ve chosen the following fields to sample:

MemTotal MemFree Buffers Cached Active(anon) Inactive(anon) Active(file) Inactive(file) SwapTotal SwapFree Dirty Writeback AnonPages Mapped Shmem Slab CommitLimit Committed_AS

While some of these, like MemTotal, shouldn’t change, it is easier to just sample them and dump them into the CSV file with everything else than to special-case them.
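As a rough illustration of the sampling described above, here is a minimal Python sketch. It is not wspy's actual code: the names `parse_meminfo` and `sample_to_csv`, the leading `time` column, and the one-second default interval are all assumptions made for the example.

```python
import csv
import time

# Fields listed above, in CSV column order.
FIELDS = [
    "MemTotal", "MemFree", "Buffers", "Cached",
    "Active(anon)", "Inactive(anon)", "Active(file)", "Inactive(file)",
    "SwapTotal", "SwapFree", "Dirty", "Writeback", "AnonPages",
    "Mapped", "Shmem", "Slab", "CommitLimit", "Committed_AS",
]


def parse_meminfo(text):
    """Parse /proc/meminfo-style text into {field: int}, values as reported (kB for most fields)."""
    values = {}
    for line in text.splitlines():
        name, sep, rest = line.partition(":")
        if not sep:
            continue  # not a "Name: value" line
        parts = rest.split()
        if parts and parts[0].isdigit():
            values[name.strip()] = int(parts[0])
    return values


def sample_to_csv(path, samples, interval=1.0, source="/proc/meminfo"):
    """Hypothetical helper: take `samples` readings and write one CSV row per reading."""
    with open(path, "w", newline="") as out:
        writer = csv.writer(out)
        writer.writerow(["time"] + FIELDS)
        start = time.time()
        for i in range(samples):
            with open(source) as f:
                info = parse_meminfo(f.read())
            elapsed = round(time.time() - start, 3)
            # Every field is written on every row; nothing is special-cased.
            writer.writerow([elapsed] + [info.get(k, "") for k in FIELDS])
            if i + 1 < samples:
                time.sleep(interval)
```

Calling `sample_to_csv("memstats.csv", samples=10)` on a Linux machine would produce a header row plus ten rows, one per second. Constant fields such as MemTotal simply repeat on each row.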

I ran the tests below on runs of OpenFOAM motorbike with 4 and 8 threads. The latter case has significantly more system time, so I’m looking to see whether this is reflected in the memory graphs.

    Running snappyHexMesh in parallel on /home/mev/openfoam-motorbike/bench_template/run_4 using 4 processes
    real 14m41.509s
    user 58m22.617s
    sys 0m12.798s

    Running snappyHexMesh in parallel on /home/mev/openfoam-motorbike/bench_template/run_8 using 8 processes
    real 15m24.120s
    user 85m54.951s
    sys 20m29.949s
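To put the difference in system time into proportion, the timings above can be converted to seconds and expressed as the kernel's share of total CPU time. This is a small Python sketch; the helper names `to_seconds` and `sys_share` are made up for the example, and the input strings are the user and sys values from the two runs.

```python
import re


def to_seconds(stamp):
    """Convert a shell `time` value such as '20m29.949s' to seconds."""
    match = re.fullmatch(r"(\d+)m([\d.]+)s", stamp)
    if match is None:
        raise ValueError(f"unrecognized time value: {stamp!r}")
    return int(match.group(1)) * 60 + float(match.group(2))


def sys_share(user, system):
    """Fraction of CPU time (user + sys) that was spent in the kernel."""
    u, s = to_seconds(user), to_seconds(system)
    return s / (u + s)


# (user, sys) pairs from the runs above, keyed by process count.
runs = {4: ("58m22.617s", "0m12.798s"), 8: ("85m54.951s", "20m29.949s")}
for procs, (user, system) in runs.items():
    print(f"{procs} processes: {100 * sys_share(user, system):.1f}% system time")
# 4 processes: 0.4% system time
# 8 processes: 19.3% system time
```

So system time goes from well under 1% of CPU time with 4 processes to almost a fifth with 8.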

An example of each of the six plots created is shown below. I still need to see how various workloads come out in order to calibrate what is most useful here.

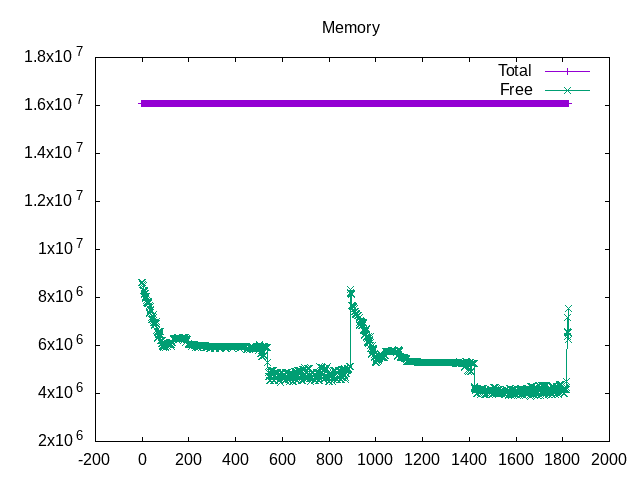

This first plot is a simple plot of the free memory (MemFree).

This next plot brings in file buffers and caches (memory that can be reclaimed and so effectively adds to free memory), the traditional fields in the output of the “free” command.

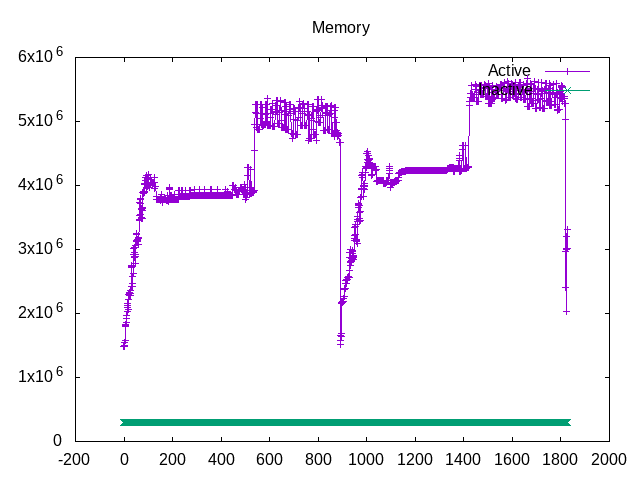
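For reference, the arithmetic that older versions of the “free” command applied to these fields can be sketched in Python as follows. This is an illustration of the classic “-/+ buffers/cache” line, not something wspy computes; `free_style` is a hypothetical helper and the sample numbers are made up.

```python
def free_style(info):
    """Derive classic `free`-style figures from meminfo values (all in kB)."""
    total = info["MemTotal"]
    free = info["MemFree"]
    cache = info["Buffers"] + info["Cached"]
    return {
        "total": total,
        "used": total - free,                      # includes buffers and cache
        "free": free,
        "buff/cache": cache,
        "used_minus_cache": total - free - cache,  # the "-/+ buffers/cache" used figure
        "free_plus_cache": free + cache,           # the "-/+ buffers/cache" free figure
    }


# Made-up sample values in kB, purely for illustration.
sample = {"MemTotal": 16000, "MemFree": 4000, "Buffers": 500, "Cached": 6500}
print(free_style(sample))
```

Plotting `free_plus_cache` next to MemFree shows how much of the apparently used memory is really just cache.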

One can also plot Active(anon) and Inactive(anon) for normal (anonymous) pages. For now, these look like an inverse of the free values.

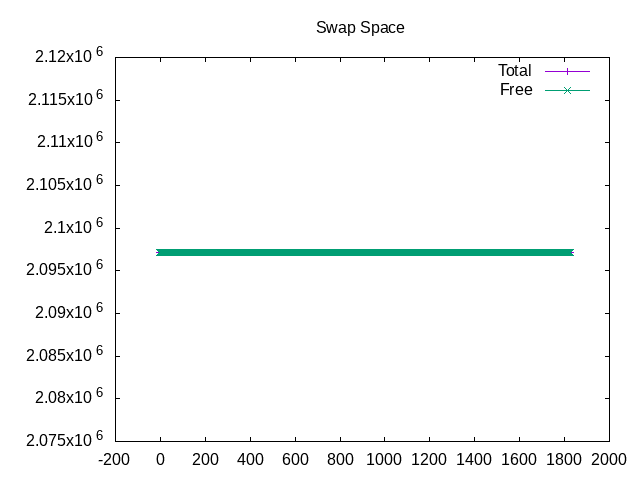

Swap space is somewhat boring, both because only a small amount is configured and because there is enough memory that none is used.

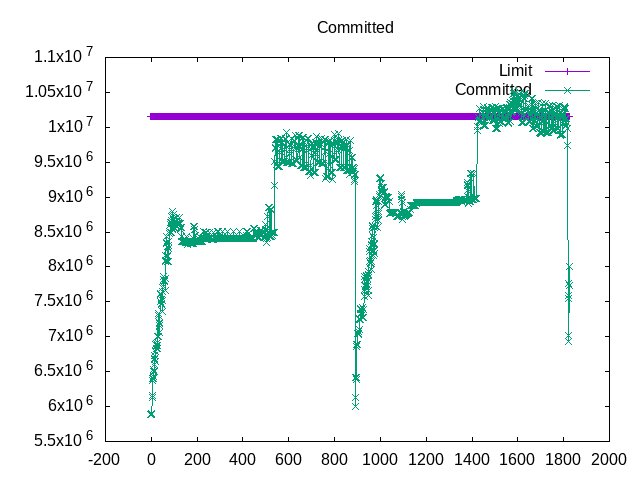

The CommitLimit and Committed_AS fields are interesting, particularly to understand what might be going on as those limits are being exceeded. Is this contributing to the larger amount of system time?

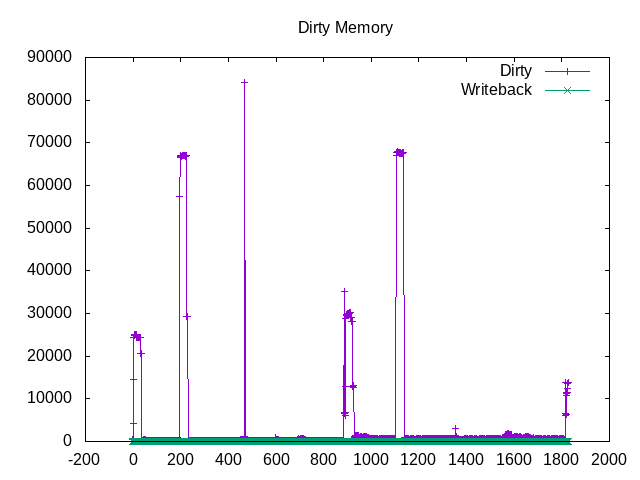
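One way to look into the CommitLimit question is to pick out the samples where Committed_AS is above CommitLimit. The Python sketch below assumes each CSV row has `time`, `CommitLimit` and `Committed_AS` columns (the `time` column and the file name `memstats.csv` are assumptions, not confirmed wspy output), and the example rows are made up. Note that the kernel only enforces CommitLimit when strict overcommit accounting is enabled (`vm.overcommit_memory` set to 2), so exceeding it in the default mode is not an error in itself.

```python
import csv


def over_commit_samples(rows):
    """Return (time, excess_kB) for each row where Committed_AS exceeds CommitLimit."""
    flagged = []
    for row in rows:
        committed = int(row["Committed_AS"])
        limit = int(row["CommitLimit"])
        if committed > limit:
            flagged.append((row["time"], committed - limit))
    return flagged


def over_commit_from_csv(path):
    """Hypothetical wrapper: read a memstats-style CSV and flag the over-committed samples."""
    with open(path, newline="") as f:
        return over_commit_samples(csv.DictReader(f))


# Made-up rows for illustration only.
rows = [
    {"time": "0.0", "CommitLimit": "10000", "Committed_AS": "8000"},
    {"time": "1.0", "CommitLimit": "10000", "Committed_AS": "12500"},
]
print(over_commit_samples(rows))  # [('1.0', 2500)]
```

Lining up the flagged times against periods of high system time would be one way to test whether the two are related.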

The scale will need calibrating for this last one, but it shows the dirty pages as they are being written out to disk. I would expect this to be a bigger issue if the disk falls behind.
